Artificial intelligence has become a key part of our lives, but its growing vulnerability to attack poses a serious danger.

Can you imagine a future where autonomous cars ignore stop signs, AI-based medical diagnoses are incorrect, or automated security systems let the wrong people through?

The risk of attacks on AI systems is real, and the consequences can be chaotic.

In the face of this threat, experts are joining forces in DARPA’s GARD (Guaranteeing AI Robustness Against Deception) project to make AI robust against deception.

This ambitious project seeks to develop algorithms and tools that protect AI systems from manipulation and attacks.

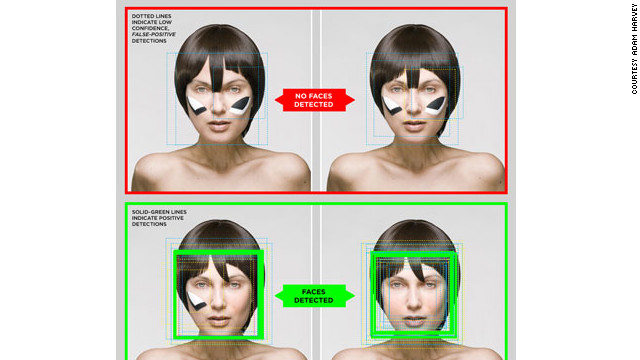

From adversarial inputs crafted to deceive deep learning algorithms to physical 3D objects that confuse neural networks, a range of attack techniques is being studied in order to strengthen AI security.
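To make the idea of a deceptive input concrete, here is a minimal sketch of one well-known attack, the fast gradient sign method (FGSM), applied to a toy logistic classifier. It is not taken from GARD's tooling: the weights, the input, and the helper names (`predict`, `fgsm_perturb`) are hypothetical and chosen only for illustration.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def predict(weights, bias, x):
    """Probability that input x belongs to class 1 under a logistic model."""
    z = sum(w * xi for w, xi in zip(weights, x)) + bias
    return sigmoid(z)

def sign(v):
    return (v > 0) - (v < 0)

def fgsm_perturb(weights, bias, x, label, epsilon):
    """Shift each feature by at most epsilon in the direction that raises the loss."""
    p = predict(weights, bias, x)
    # Gradient of the cross-entropy loss with respect to the input features.
    grad = [(p - label) * w for w in weights]
    return [xi + epsilon * sign(g) for xi, g in zip(x, grad)]

# Hypothetical toy model and input (assumptions, not real data).
WEIGHTS = [2.0, -3.0, 1.0]
BIAS = 0.0
x_clean = [0.5, 0.1, 0.2]

x_adv = fgsm_perturb(WEIGHTS, BIAS, x_clean, label=1, epsilon=0.2)
p_clean = predict(WEIGHTS, BIAS, x_clean)
p_adv = predict(WEIGHTS, BIAS, x_adv)

print("clean input score:    ", round(p_clean, 3))
print("perturbed input score:", round(p_adv, 3))
```

In this toy setup, a change of at most 0.2 per feature is enough to push the score from above 0.5 to below it, so the predicted class flips. Attacks on real deep networks follow the same principle, with gradients computed by the training framework rather than by hand.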

The importance of this initiative lies in the increasing dependence on AI in critical sectors such as smart cities, power grids, and healthcare. As AI becomes more pervasive, the risk of attacks grows with it.

The collaboration between DARPA and leading technology companies like IBM and Google is intended to produce strong defenses and evaluation tools to protect current and future AI models.

AI security is crucial to prevent chaos in our lives.

While most of us use AI in non-critical applications, such as movie recommendations on Netflix, the real challenge lies in protecting systems like autonomous vehicles. GARD aims to anticipate future security challenges and make AI resilient to a broad range of attacks.

The future is at stake, and experts are mobilizing to protect our trust in artificial intelligence.

The GARD project is at the forefront of this effort, with the goal of building a robust shield for AI before it’s too late.
